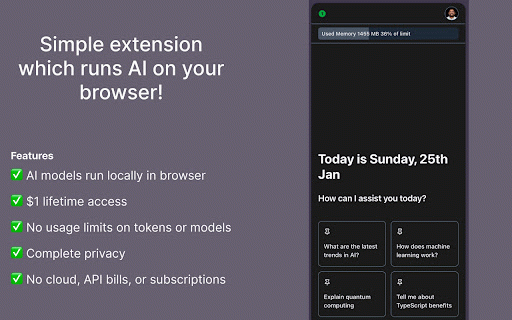

NoAIBills: Local AI Chat with DeepSeek & Llama

53 users

Developer: psgganesh

Version: 2.2.2

Updated: 2026-02-12

Available in the Chrome Web Store

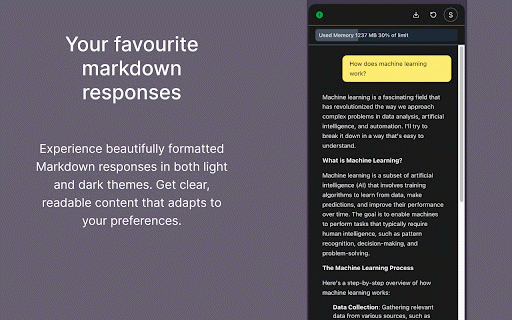

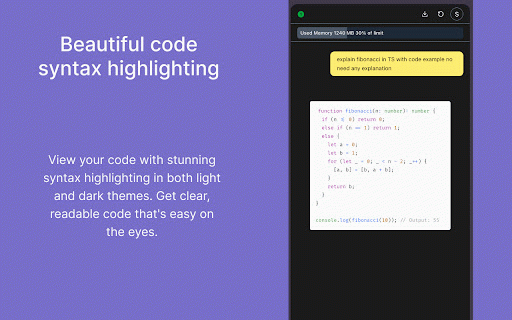

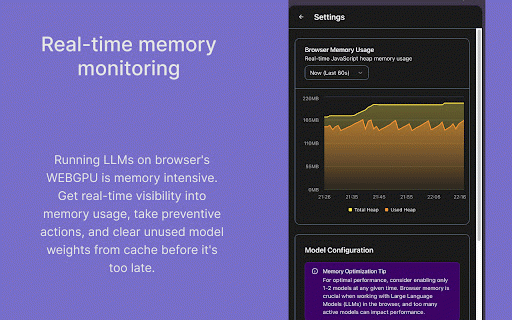

NoAIBills lets you chat with powerful open-source AI models directly in your browser, completely offline. Everything runs locally on your device using WebGPU. Nothing goes to the cloud: not a single byte of your conversations is sent anywhere.

𝗪𝗛𝗬 𝗨𝗦𝗘 𝗡𝗢𝗔𝗜𝗕𝗜𝗟𝗟𝗦?
✓ 100% private: your messages, files, and chat history stay on your device and never leave it
✓ Works offline: after the initial model download, no internet is needed
✓ 100% free: no subscriptions, no hidden fees
✓ No install: no .exe, no terminal, no admin access, no Ollama setup; it is just a browser extension
✓ Stored locally: model weights are downloaded once and cached in your browser's IndexedDB (the browser's built-in NoSQL database), and your chat history stays there too

𝗣𝗘𝗥𝗙𝗘𝗖𝗧 𝗙𝗢𝗥
- Anyone who wants AI assistance but is unable to install desktop apps due to company restrictions
- Handling sensitive documents and information privately
- Working offline: on flights, during commutes, anywhere without internet
- Writing emails and essays, summarizing articles, brainstorming ideas, editing text, translations, and code debugging

𝗦𝗨𝗣𝗣𝗢𝗥𝗧𝗘𝗗 𝗠𝗢𝗗𝗘𝗟𝗦 (113+ model variants available)
- Llama 3.2 (1B, 3B)
- DeepSeek-R1 (DeepSeek-R1-Distill-Qwen-7B)
- Qwen2 (0.5B, 1.5B, 7B)
- Mistral 7B
- Gemma 2 (2B)
- Phi 3

𝗥𝗘𝗤𝗨𝗜𝗥𝗘𝗠𝗘𝗡𝗧𝗦
- Chrome (or Edge/Brave) with WebGPU support
- 4GB+ RAM
- GPU recommended
- Internet required only for the initial model download

Built on open-source technology: WebGPU, Transformers.js, and MLC AI. Install once, start chatting, and everything stays yours.
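Because WebGPU support is a hard requirement, you may want to confirm that your browser exposes it before downloading a model. The sketch below is a minimal, hypothetical check, not code from NoAIBills: `checkWebGpu`, `NavigatorLike`, and `WebGpuStatus` are illustrative names. The only real API it relies on is the standard WebGPU entry point `navigator.gpu.requestAdapter()`, which resolves to `null` when no suitable GPU adapter is available.

```typescript
// Hypothetical helper types: a minimal local description of the WebGPU
// surface used here, so the sketch compiles without @webgpu/types.
interface GpuLike {
  requestAdapter(): Promise<object | null>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

type WebGpuStatus = "unsupported" | "no-adapter" | "available";

// Hypothetical check: reports whether the browser exposes navigator.gpu
// and whether it can actually hand out a GPU adapter.
async function checkWebGpu(nav: NavigatorLike): Promise<WebGpuStatus> {
  if (!nav.gpu) {
    // The browser does not expose the WebGPU API at all.
    return "unsupported";
  }
  try {
    // requestAdapter() resolves to null when no suitable GPU is found.
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "available" : "no-adapter";
  } catch {
    // Treat a failed request the same as having no usable adapter.
    return "no-adapter";
  }
}

// Usage in a browser page or DevTools console:
// checkWebGpu(navigator as unknown as NavigatorLike).then(console.log);
```

A result of `"available"` means the browser can reach a GPU through WebGPU. `"unsupported"` usually means the browser or version lacks WebGPU. `"no-adapter"` suggests the API exists but no compatible GPU was found.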

Related

- Team Bookmarks (120)
- Loop8 Privacy Manager (60)
- AlarmBot: ULTIMATE Web Monitoring & Smart Price Alerts (162)
- The Big Gift List (61)
- Hacker News Companion (244)
- Crompt AI: One Platform That Brings GPT, Claude, Gemini & More Together (133)
- Facebook Photo Deleter Pro (54)
- nexos.ai: Make AI work where you work (211)
- Just3D - 3D Model Viewer & Converter (233)
- ABCommerce (122)
- eBay Lister for Discogs (140)
- MangoFlow: AI Chat Sidebar (21)