Sitemap Validator Pro

64 users

Developer: adnanalpolink

Version: 2.0

Updated: 2026-02-18

Available in the Chrome Web Store

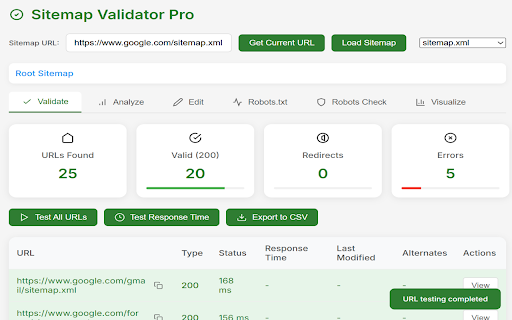

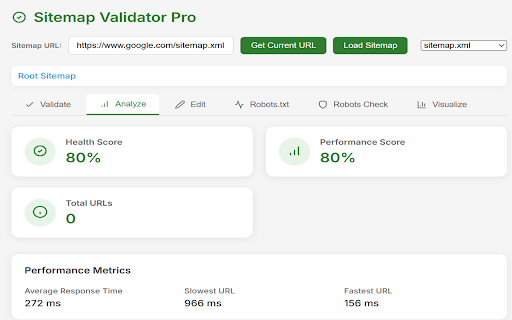

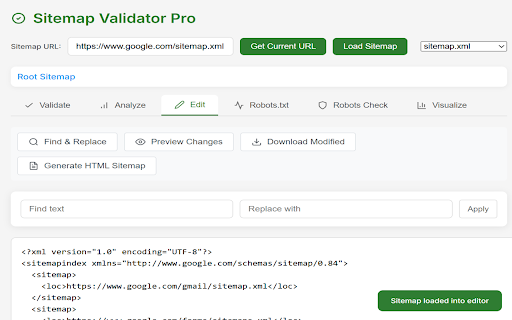

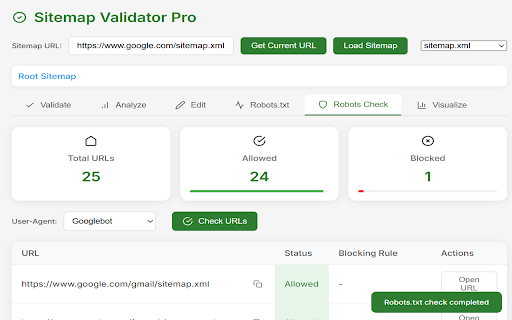

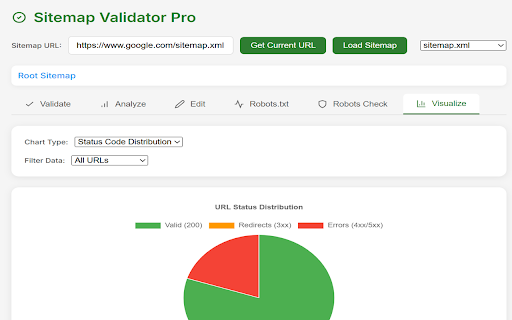

Sitemap Validator Pro is an all-in-one technical SEO extension for webmasters and SEO pros. It parses XML sitemaps (including sitemap indexes), checks each URL for crawlability and indexability, and flags issues that waste crawl budget or harm indexing. Analysis runs locally in your browser.

Key features:

🔍 Sitemap validation: parse standard XML sitemaps and sitemap indexes, or paste URLs to check them in bulk.
📊 HTTP status codes: detect redirects (3xx) and broken URLs (4xx/5xx), and view the status code distribution.
↪️ Redirect chains: find redirect chains and sitemap URLs that redirect.
⏱️ Response times: measure response time per URL, see the average, and spot the fastest and slowest URLs.
🤖 Robots checks: verify robots.txt rules for different user-agents (Googlebot, Bingbot, etc.), meta robots tags, and X-Robots-Tag headers, including noindex, nofollow, and noarchive directives.
🚫 Conflict detection: flag sitemap URLs that are blocked by robots.txt or noindexed, and conflicting signals between meta robots tags and X-Robots-Tag headers.
🔗 Canonical verification: check canonical tags and flag missing or non-self-referencing canonicals.
✏️ robots.txt editing: edit and test robots.txt rules inside the extension.
💯 Health score: an overall sitemap health score.
💡 Recommendations: actionable findings with auto-suggested fixes.
🥧 Visualization: an interactive dashboard with pie and bar charts for status codes, indexability, and response times.
🧭 Navigation: multi-tab results with filters to jump to the URLs that need review.
💾 Export: save results as CSV or JSON, and export charts as PNG.
💻 Built with modern web tools (HTML5, CSS3, JavaScript).

Use cases: technical SEO audits, site migrations, and sitemap hygiene checks.