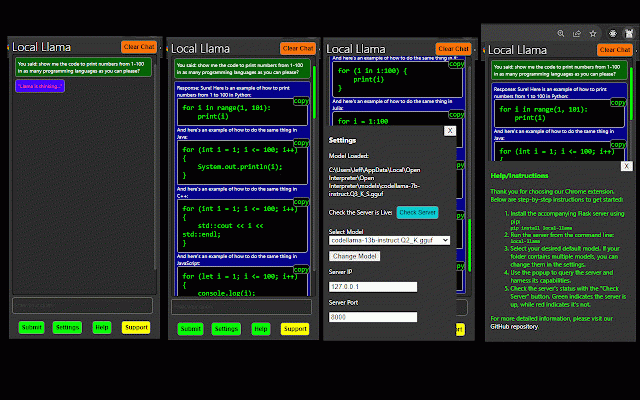

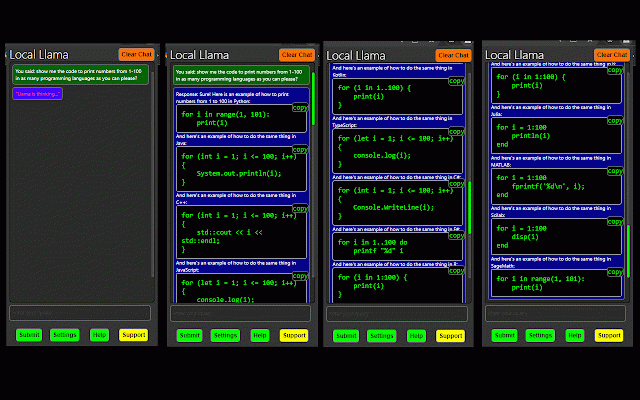

Local LLama LLM AI Chat Query Tool

129 users

Developer: RowDog

Version: 1.0.6

Updated: 2023-10-02

Available in the Chrome Web Store

Elevate your browsing experience with our cutting-edge Chrome extension, designed to seamlessly interact with local models hosted on your own server. This extension allows you to effortlessly unlock the power of querying local models with precision, all from within your browser.

Our extension is fully compatible with both Llama CPP and .gguf models, providing you with a versatile solution for all your modeling needs. To get started, simply access our latest version, which includes a sample Llama CPP Flask server for your convenience. You can find this server on our GitHub repository: https://github.com/mrdiamonddirt/local-llama-chrome-extension

To set up the server, install the server's pip package with the following command:

```
pip install local-llama
```

Then, just run:

```
local-llama
```

With just a few straightforward steps, you can harness the capabilities of this extension. Run the provided Python script, install the extension, and instantly gain the ability to query your local models. Experience the future of browser-based model interactions today.

Related

open-os LLM Browser Extension

1,000+

ChatLlama: Chat with AI

88

Local AI helper

33

sidellama

340

Ollama Chrome API

134

AiBrow: Local AI for your browser

50

OpenTalkGPT - UI to access DeepSeek,Llama or open source modal with rag.

194

Chatty for LLMs

34

SecureAI Chat: Chat with Local LLM Models

65

Local LLM Helper

292

WebextLLM

137

llama explain

49