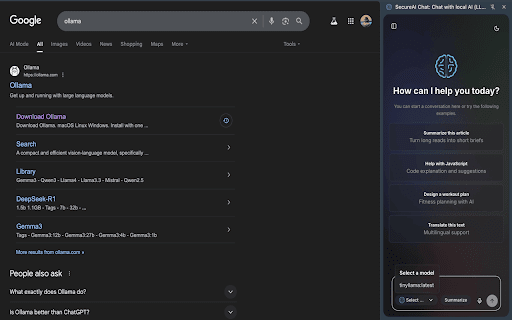

SecureAI Chat: Chat with Local LLM Models

65 users

Developer: Chethan

Version: 1.1

Updated: 2026-03-03

Available in the Chrome Web Store


This extension brings the power of local LLMs directly into your browser. Chat with AI models running locally on your machine via Ollama. No cloud APIs, no API key required, no tracking.

Features:

💬 Floating chat window: launch a clean, simple chat UI on any tab with one click.

🔒 100% private: your data stays on your system. No data is sent to external or cloud servers.

🛠️ Choose between multiple installed models (e.g., Llama 3, Mistral, etc.).

Summarise selected content from a web page.

Fast, lightweight, and powered by open-source LLMs.

Whether you're coding, researching, reading, or brainstorming, this extension lets you ask questions and get intelligent answers instantly.

Disclaimer: This extension is not affiliated with or endorsed by Ollama, Meta, or Google. “Ollama”, “Llama”, and “Chrome” are trademarks of their respective owners.

Related

Page Assist - A Web UI for Local AI Models

300,000+

Local LLama LLM AI Chat Query Tool

129

Sen Chat: AI Sidebar - Chat with all AI models

124

Ollama KISS UI

228

Slash Engine - Web based interface for local AI models.

18

Ollama Sidekick

60

AIskNet

31

Local LLM Helper

292

Highlight X | AI Chat with Ollama, Local AI, Open AI, Claude, Gemini & more

565

Ollama Client - Chat with Local LLM Models

1,000+

AI Summary Helper - OpenAI, Gemini, Mistral, Ollama, Kindle Save Articles

248

Offload: Fully private AI for any website using local models.

29